Governing AI agent identity: a practitioner's guide

A practitioner's guide to AI agent identity governance: threat model, discovery, EU AI Act and NIST AI RMF assessment, blast radius analysis, remediation playbook, and framework mapping tables.

Introduction: the identity layer that AI governance tools can't see

Enterprise AI governance conversations tend to focus on what employees do with AI tools: which models they use, what data they paste into prompts, and whether outputs comply with acceptable use policy. This is AI usage governance, and most organizations now have a set of tools (e.g., browser monitoring, data loss prevention for GenAI, prompt inspection) to address these usage concerns.

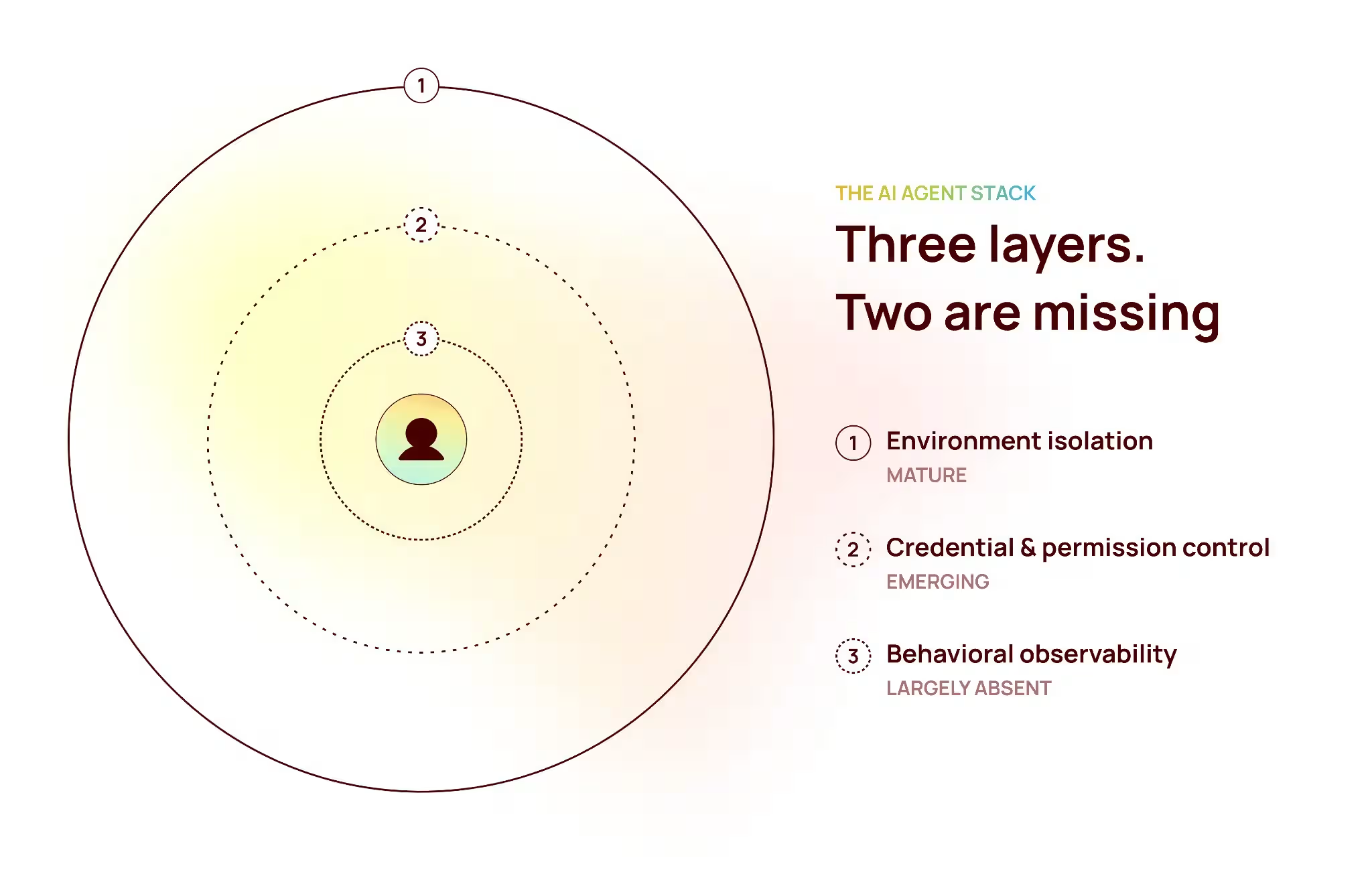

But there is a second AI governance problem: what AI agents can access and do. This is AI identity governance. AI identity governance is more structurally complex. It maps more directly to regulatory requirements. And most governance tools cannot see it.

Here’s a quick look at how AI identity works. When you deploy an agent in Microsoft Copilot Studio, the platform creates a service principal in Microsoft Entra ID with an OAuth credential, and that principal receives whatever permissions you specify. The same pattern applies in Salesforce Agentforce, Azure AI Foundry, ServiceNow, and GitHub Copilot. That identity (the service principal, managed identity, or app registration) is the agent's footprint in your enterprise. It is how the agent accesses data, executes actions, and interacts with other systems.

A CISO needs both layers: usage governance to see what people are doing with AI; identity governance to see what AI agents can do to your enterprise. The two disciplines address different attack surfaces, different regulatory obligations, and different operational questions.

But most organizations lack the necessary visibility to manage AI identity governance at speed and scale. They don’t know how many AI identities exist, what they can access, who owns them, or whether they are still in use. This guide covers those blind spots — and how to build the capabilities necessary for effective AI identity governance.

The AI agent identity threat model

Understanding AI agent identity risk starts with a clear threat model. AI agents share characteristics that create attack surfaces distinct from those of human users: they authenticate continuously, they do not trigger MFA prompts, they have no manager relationships, and they persist in your environment indefinitely without a termination process.

Six threat vectors define the identity-specific risk landscape.

Over-provisioned permission scope

AI agents are routinely granted more access than they need. Low-code deployment platforms make it faster to request broad permissions than to scope them precisely. A developer building a SharePoint summarization agent will accept the default permission suggestions, which may include Directory.ReadWrite.All and Application.ReadWrite.All, because scoping errors slow development and broad permissions eliminate debugging friction. The agent ships with tenant-wide write access for a task that needs read access to a single site.

In a representative assessment, Oleria found four AI agents holding 12 high-privilege scopes collectively. Usage across all 12: zero. The utilization gap was 100% — a direct failure of the principle of least-privilege access and a specific non-compliance finding under EU AI Act Article 10.

Dormant high-privilege agents

An agent built for a proof-of-concept that never moved to production does not remove itself. It persists as a service principal in your identity provider, holding whatever permissions it was originally granted, owned by an account that may not remember it exists.

Dormancy amplifies risk rather than reducing it. A dormant agent has no behavioral baseline. Security operations has no context for what normal activity looks like. When the agent is eventually compromised and begins acting, the activity looks novel. Detection is slower. The response window narrows.

In the assessment environment, agents had been dormant for 138 to 365 days. The longest-dormant agents held the highest-privilege scopes.
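A dormancy check of this kind can be sketched in a few lines. This is a minimal illustration, not the assessment's actual method: the agent names and dates are hypothetical, and the 90-day threshold is an assumption borrowed from the remediation playbook's "90+ days of dormancy" rule.

```python
from datetime import date

DORMANCY_THRESHOLD_DAYS = 90  # assumption: borrowed from the playbook's 90+ day rule


def days_dormant(last_activity: date, today: date) -> int:
    """Whole days elapsed since the agent's last recorded activity."""
    return (today - last_activity).days


def is_dormant(last_activity: date, today: date,
               threshold_days: int = DORMANCY_THRESHOLD_DAYS) -> bool:
    """True when the agent has been idle for at least threshold_days."""
    return days_dormant(last_activity, today) >= threshold_days


# Hypothetical agents and dates, for illustration only.
today = date(2025, 5, 19)
last_seen = {
    "poc-summarizer": date(2025, 1, 1),
    "active-helper": date(2025, 5, 10),
}
dormant = sorted(name for name, seen in last_seen.items() if is_dormant(seen, today))
```

In practice the last-activity date would come from sign-in or audit logs in the identity provider. The threshold is a policy choice rather than a fixed rule.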

Ownership concentration

Enterprise AI agent deployments consistently show a small number of administrator accounts owning a large number of agents. In the representative assessment, a single Global Administrator account owned all four agents under review.

Ownership concentration turns a single account compromise into an estate-wide event. One successful phishing attack, one session token theft, one credential stuffed from a breach database: the attacker does not just compromise an account. They compromise every agent that account controls, simultaneously, with no additional effort.
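One way to make ownership concentration measurable is to compute the share of the agent estate each owner account controls. The sketch below is illustrative only; the agent and account names are hypothetical, and the input is assumed to be a simple agent-to-owner mapping exported from your inventory.

```python
from collections import Counter


def ownership_concentration(agent_owners: dict[str, str]) -> dict[str, float]:
    """Return the fraction of all agents controlled by each owner account."""
    counts = Counter(agent_owners.values())
    total = len(agent_owners)
    return {owner: n / total for owner, n in counts.items()}


# Hypothetical inventory: four agents, all owned by one administrator account.
agent_owners = {
    "agent-a": "global-admin",
    "agent-b": "global-admin",
    "agent-c": "global-admin",
    "agent-d": "global-admin",
}
shares = ownership_concentration(agent_owners)
```

A share of 1.0 for a single account, as in this example, means one account compromise reaches every agent in scope.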

Identity chaining and delegation

Agents do not always act alone. An orchestrator agent can delegate tasks to sub-agents. An agent can impersonate a human user through OAuth delegation. A pipeline of agents can pass context and credentials from one to the next without any human in the chain.

These delegation chains create risk paths that are invisible when you examine any single agent in isolation. A sub-agent with narrow permissions may be reachable from an orchestrator with broad ones. The lineage matters as much as the individual node.
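The lineage idea can be sketched as a graph traversal. The example assumes that controlling an agent lets an attacker drive every agent it can delegate to, so the effective reach of an agent is the union of scopes along its delegation chain. The agent names and the `delegates_to` structure are hypothetical.

```python
from collections import deque


def effective_scopes(start: str,
                     delegates_to: dict[str, list[str]],
                     scopes: dict[str, set[str]]) -> set[str]:
    """Union of scopes held by start and by every agent reachable via delegation."""
    seen = {start}
    queue = deque([start])
    reachable: set[str] = set()
    while queue:
        agent = queue.popleft()
        reachable |= scopes.get(agent, set())
        for nxt in delegates_to.get(agent, []):
            if nxt not in seen:  # guard against delegation cycles
                seen.add(nxt)
                queue.append(nxt)
    return reachable


# Hypothetical delegation chain.
delegates_to = {"orchestrator": ["summarizer", "mailer"], "summarizer": ["fetcher"]}
scopes = {
    "orchestrator": {"Directory.ReadWrite.All"},
    "summarizer": {"Sites.Read.All"},
    "mailer": {"Mail.Send"},
    "fetcher": {"Files.Read.All"},
}
orchestrator_reach = effective_scopes("orchestrator", delegates_to, scopes)
```

Examined alone, `summarizer` holds one read scope. Examined through the chain, the orchestrator reaches all four.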

Lateral movement through shared access

Agents that share permission templates, ownership, and credential patterns create lateral movement vectors. Compromising one creates a pivot point into all of them.

In the assessment, the Confidential SharePoint Agent shared its ownership chain and scope patterns with three sibling agents. Beyond those four, 30+ additional high-privilege NHIs in the same environment shared Microsoft Graph API access with similar privilege profiles. A single compromise does not stay contained to one agent.

Owner account compromise

The security posture of the account controlling an agent is the minimum floor for the security of the agent itself. There is no version of a well-governed agent owned by a poorly secured account.

In the assessment, the owner account used email and SMS multi-factor authentication, the most commonly bypassed MFA methods. The account had generated suspicious API traffic 51 days before the assessment. Credential rotation had not occurred in 149 days. Every one of these signals flows directly into the agent's blast radius score under the Owner Risk dimension.

Discovery: building your AI agent inventory

You cannot govern what you cannot see. The foundation of AI agent identity governance is a complete, continuously maintained inventory of every agent in your environment. Without it, every other governance activity operates on incomplete information.

What the identity graph reveals

AI agents exist in your identity provider as service principals, managed identities, and OAuth app registrations. These objects are queryable today through APIs like Microsoft Graph. Oleria's identity-native discovery identifies AI agents via model endpoint access patterns, orchestration signatures, and the nhiType: AIAgent classification — without requiring agents to self-register, business teams to submit tickets, or security teams to conduct manual interviews.

A read-only Graph API integration delivers an initial inventory in under 60 minutes. That inventory includes agents deployed years ago, agents created by employees who have since left, and agents built for pilots that were never officially launched. The identity graph sees them all.

For example, in an illustrative scenario, an organization scans its environments and uncovers 1,112 total non-human identities. Only 40 (3.6%) have documented owners; the remaining 96% are ownerless. Of the total, 934 are dormant. What the team believed it had visibility into turns out to be a small fraction of the actual NHI estate.
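The percentages in this scenario follow directly from the three counts. The short check below uses only the figures given in the scenario; the dormant share is derived from them rather than stated in the source.

```python
total_nhis = 1112
documented_owners = 40
dormant = 934

owned_pct = 100 * documented_owners / total_nhis
ownerless_pct = 100 * (total_nhis - documented_owners) / total_nhis
dormant_pct = 100 * dormant / total_nhis

print(f"owned: {owned_pct:.1f}%, ownerless: {ownerless_pct:.1f}%, dormant: {dormant_pct:.1f}%")
# prints "owned: 3.6%, ownerless: 96.4%, dormant: 84.0%"
```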

What to capture for each agent

A complete AI agent inventory entry should capture the following for each discovered agent:

The instinct when shadow agents are discovered is to build a registration process: require teams to submit a request before deploying an agent. It is a reasonable policy. In practice it fails for two reasons.

First, low-code platforms make deployment faster than governance processes. The agent is live and holding permissions before the ticket is reviewed. Second, registration policies do not reach the existing estate. Every agent created before the policy existed remains invisible, and that population is almost always larger than the new-deployment volume.

Discovery must be automated, continuous, and retroactive. The identity graph does not care when an agent was created.

Assessment: EU AI Act and NIST AI RMF through the identity lens

Once you have an inventory, you need to assess each agent against the regulatory frameworks your auditors will apply. Both the EU AI Act and the NIST AI Risk Management Framework map directly to identity evidence. The data you need for compliance is largely already available in the identity layer.

EU AI Act: article-by-article identity mapping

The EU AI Act's high-risk provisions become fully enforceable August 2, 2026. The compliance evidence required for each article maps almost entirely to identity data. In a representative assessment, Oleria found an overall EU AI Act compliance score of 7% — 11 of 15 assessed controls failing — with the following article-level findings:

The core finding: most EU AI Act compliance evidence lives in the identity layer. Organizations that govern AI agent identity properly are strongly positioned to accelerate EU AI Act compliance. For a full walkthrough of what each article requires operationally, see EU AI Act compliance for AI agents.

NIST AI RMF: identity governance as the foundation

The NIST AI Risk Management Framework organizes AI governance into four functions: GOVERN, MAP, MEASURE, and MANAGE. In a representative assessment, overall NIST AI RMF maturity scored 1.2 out of 5.0 — classified as LOW maturity across all four functions.

Every gap has an identity component. GOVERN requires knowing what agents exist and who is accountable for each. MAP requires documenting purpose against permission scope. MEASURE requires activity logs to track behavior over time. MANAGE requires owner accountability chains for incident response.

Blast radius analysis: a step-by-step process

Blast radius answers the most operationally important question about any AI agent: if this agent were compromised right now, how bad would it be? The full scoring methodology and a detailed worked example are covered in AI agent blast radius: how to quantify AI risk and communicate it to your board. This section covers the six-step process for practitioners running their own assessments.

Step 1: enumerate permissions

Pull the complete permission manifest for the agent from your identity provider. List every scope granted. Distinguish between delegated permissions (the agent acts on behalf of a user) and application permissions (the agent acts as itself). Application permissions with tenant-wide scope — Directory.ReadWrite.All, Application.ReadWrite.All — carry inherently higher risk than delegated permissions scoped to a specific resource.
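As a sketch of this step, the function below builds a manifest from payloads that have already been fetched. It assumes the records are shaped like Microsoft Graph `appRoleAssignments` entries (with an `appRoleId` field) and `oauth2PermissionGrants` entries (with a space-separated `scope` string). The `role_names` lookup, which would be built from the resource service principal's `appRoles`, and the GUID placeholders are hypothetical.

```python
# The two tenant-wide application scopes named in the text above.
TENANT_WIDE = {"Directory.ReadWrite.All", "Application.ReadWrite.All"}


def build_manifest(app_role_assignments: list[dict],
                   oauth2_grants: list[dict],
                   role_names: dict[str, str]) -> list[dict]:
    """Flatten application and delegated grants into one labeled list."""
    manifest = []
    for assignment in app_role_assignments:
        role_id = assignment["appRoleId"]
        # Fall back to the raw id when the role name is unknown.
        manifest.append({"scope": role_names.get(role_id, role_id), "kind": "application"})
    for grant in oauth2_grants:
        for name in grant["scope"].split():
            manifest.append({"scope": name, "kind": "delegated"})
    for entry in manifest:
        entry["tenant_wide_app"] = (
            entry["kind"] == "application" and entry["scope"] in TENANT_WIDE
        )
    return manifest


# Hypothetical payloads; the ids are placeholders, not real role GUIDs.
manifest = build_manifest(
    [{"appRoleId": "role-guid-1"}, {"appRoleId": "role-guid-2"}],
    [{"scope": "Sites.Read.All Files.Read.All"}],
    {"role-guid-1": "Directory.ReadWrite.All", "role-guid-2": "Application.ReadWrite.All"},
)
high_risk = [e["scope"] for e in manifest if e["tenant_wide_app"]]
```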

Step 2: identify unused scopes

Cross-reference permissions granted against permissions used, drawn from activity logs. Unused scopes are your first remediation target and your clearest Article 10 non-compliance signal. In the assessment environment, 12 high-privilege scopes were granted across the four agents and none were used. That is a 100% utilization gap.
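The cross-reference is a set difference, and the utilization gap is the unused share of what was granted. A minimal sketch follows; the scope labels are placeholders, not the scopes from the assessment.

```python
def utilization_gap(granted: set[str], used: set[str]) -> tuple[set[str], float]:
    """Return the unused scopes and the fraction of granted scopes never exercised."""
    unused = granted - used
    gap = len(unused) / len(granted) if granted else 0.0
    return unused, gap


# Placeholder labels standing in for 12 granted high-privilege scopes.
granted = {f"scope-{i}" for i in range(1, 13)}
used: set[str] = set()  # activity logs show no scope exercised

unused, gap = utilization_gap(granted, used)
```

With 12 scopes granted and none used, `gap` is 1.0, which matches the 100% figure above.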

Step 3: map the ownership chain

Who owns the agent? What role do they hold? How many other agents do they own? What is the security posture of their account: MFA type, credential age, and recent suspicious activity? The owner chain is both a governance dependency and the most reliable attack vector against the agent.

Step 4: discover sibling agents

Identify other agents that share the same owner, the same permission templates, or both. Siblings create lateral movement paths. A compromise that targets one can pivot to all of them if they share credentials or trust relationships. Treat siblings as part of the same risk unit, not separate assessments.
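Sibling discovery can be sketched as a comparison of owner and scope set across the inventory. This example treats an identical scope set as "the same permission template", which is a simplifying assumption; the agent names and inventory structure are hypothetical.

```python
def find_siblings(target: str, agents: dict[str, dict]) -> list[str]:
    """Agents that share the target's owner, its exact scope set, or both."""
    reference = agents[target]
    siblings = []
    for name, agent in agents.items():
        if name == target:
            continue
        same_owner = agent["owner"] == reference["owner"]
        same_template = agent["scopes"] == reference["scopes"]
        if same_owner or same_template:
            siblings.append(name)
    return sorted(siblings)


# Hypothetical inventory.
agents = {
    "sharepoint-agent": {"owner": "admin-1", "scopes": {"Sites.Read.All"}},
    "hr-agent": {"owner": "admin-1", "scopes": {"User.Read.All"}},
    "finance-agent": {"owner": "admin-2", "scopes": {"Sites.Read.All"}},
    "lab-agent": {"owner": "admin-3", "scopes": {"Mail.Send"}},
}
siblings = find_siblings("sharepoint-agent", agents)
```

A looser match, such as scope-set overlap above some threshold, would catch near-identical templates at the cost of more false positives.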

Step 5: trace lateral movement potential

Given the agent's permissions, map the attack paths available to an adversary. Application.ReadWrite.All enables an attacker to register new malicious applications and escalate privileges. Directory.ReadWrite.All enables creating new admin accounts. Chat.Read.All grants access to every Teams conversation in the organization. Trace each high-privilege scope to its downstream consequence before scoring.
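The tracing step can start as a simple lookup from scope to downstream consequence. The sketch below encodes only the three mappings described above; anything else is returned as unmapped for manual review rather than guessed at.

```python
# Consequences taken from the paragraph above; extend this table for your own estate.
SCOPE_CONSEQUENCES = {
    "Application.ReadWrite.All": "register new malicious applications and escalate privileges",
    "Directory.ReadWrite.All": "create new admin accounts",
    "Chat.Read.All": "read every Teams conversation in the organization",
}


def trace_attack_paths(scopes: set[str]) -> tuple[dict[str, str], list[str]]:
    """Split scopes into those with a known consequence and those needing review."""
    mapped = {s: SCOPE_CONSEQUENCES[s] for s in sorted(scopes) if s in SCOPE_CONSEQUENCES}
    unmapped = sorted(s for s in scopes if s not in SCOPE_CONSEQUENCES)
    return mapped, unmapped


mapped, unmapped = trace_attack_paths(
    {"Directory.ReadWrite.All", "Chat.Read.All", "Sites.Read.All"}
)
```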

Step 6: score blast radius

Score each agent across five dimensions on a 1 to 5 scale. Aggregate to a single severity rating.

Aggregate score maps to severity: 1.0-2.0 Low, 2.1-3.0 Medium, 3.1-4.0 High, 4.1-5.0 Critical.
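The band mapping can be sketched as follows. Two assumptions are labeled here and in the code: the aggregate is taken as an unweighted mean of the five dimension scores, and the dimension names other than Owner Risk are hypothetical placeholders. The severity bands themselves come from the line above.

```python
def severity(score: float) -> str:
    """Map an aggregate score to the severity bands given in the text."""
    score = round(score, 1)  # bands are stated to one decimal place
    if not 1.0 <= score <= 5.0:
        raise ValueError("aggregate score must be between 1.0 and 5.0")
    if score <= 2.0:
        return "Low"
    if score <= 3.0:
        return "Medium"
    if score <= 4.0:
        return "High"
    return "Critical"


def blast_radius(dimension_scores: dict[str, int]) -> tuple[float, str]:
    """Aggregate 1-5 dimension scores (assumption: unweighted mean) into a rating."""
    aggregate = sum(dimension_scores.values()) / len(dimension_scores)
    return round(aggregate, 1), severity(aggregate)


# Only "owner_risk" is named in the text; the other four names are placeholders.
scores = {"owner_risk": 5, "dim_2": 5, "dim_3": 4, "dim_4": 5, "dim_5": 4}
aggregate, rating = blast_radius(scores)
```

A weighted aggregate would change only the `blast_radius` function. The band mapping stays the same.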

Example: Confidential SharePoint Agent

Remediation playbook

Not all remediations carry equal weight. Prioritize by blast radius severity and regulatory requirement. The sequence below maps to the EU AI Act articles most likely to surface first in an audit.

Immediate (within 24 hours)

- Disable dormant Critical agents. Any agent scoring Critical on blast radius with 90+ days of dormancy should be disabled pending formal review. Risk cost of leaving it active: exposure under Articles 9 and 15 plus a live attack surface.

- Flag owner accounts with weak MFA or suspicious activity. An agent owned by a potentially compromised account is effectively already compromised. Treat owner account remediation with the same urgency as remediation of the agent itself.

Regulatory mapping: Art. 15 (cybersecurity), Art. 14 (human oversight)
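The two immediate rules above can be expressed as a small triage function. This is a sketch under stated assumptions: the record fields and action labels are hypothetical, and "weak MFA" is taken to mean email or SMS, as in the owner account section.

```python
WEAK_MFA = {"email", "sms"}  # assumption: the weak methods named earlier in this guide


def immediate_actions(agent: dict) -> list[str]:
    """Return the 24-hour actions that apply to one agent record."""
    actions = []
    if agent["severity"] == "Critical" and agent["days_dormant"] >= 90:
        actions.append("disable pending review")
    if agent["owner_mfa"] in WEAK_MFA or agent["owner_suspicious_activity"]:
        actions.append("flag owner account")
    return actions


# Hypothetical record for illustration.
record = {
    "severity": "Critical",
    "days_dormant": 138,
    "owner_mfa": "sms",
    "owner_suspicious_activity": True,
}
actions = immediate_actions(record)
```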

Critical (within 1 week)

- Remove all unused high-privilege scopes. Revoke any scope that has never been exercised. This is the single highest-impact remediation for both security posture and Article 10 compliance. An agent that has never used Application.ReadWrite.All in its operational lifetime does not need it.

- Implement least-privilege for active agents. Reduce permission scope to the minimum required for the documented purpose. Require explicit justification for any tenant-wide permission going forward.

Regulatory mapping: Art. 9 (risk management), Art. 10 (data governance)

High (within 30 days)

- Remediate sibling agents. Agents sharing scope and ownership patterns with a Critical agent require the same treatment. Remediating one while leaving its siblings in place is not remediation.

- Upgrade owner authentication. Move all agent owner accounts from email/SMS MFA to phishing-resistant authentication: FIDO2 security keys or Windows Hello for Business. This is the single change that most reduces the Owner Risk dimension of blast radius scoring.

- Create technical documentation for every active agent. Document purpose, capabilities, permission justification, and performance expectations. This satisfies Art. 11 and creates the foundation for recertification reviews.

Regulatory mapping: Art. 4 (AI literacy), Art. 11 (technical documentation)

Medium (within 90 days)

- Establish lifecycle governance. Define what happens when an agent owner leaves the organization. Define dormancy thresholds that trigger automated review. Build decommissioning procedures with full audit trails.

- Register high-risk agents. Complete EU AI Act Article 49 database registration for agents that qualify as high-risk under Article 6.

- Implement human-in-the-loop workflows. For agents with write access to enterprise systems, require human approval for consequential actions. Document the approval process.

Regulatory mapping: Art. 14 (human oversight), Art. 26 (deployer obligations), Art. 49 (database registration)

The 90-day recertification model

Recertification applies the same logic to AI agents that access reviews apply to human users: at regular intervals, confirm that access is still needed, still appropriate, and still owned by the right account. For AI agents, 90 days is the recommended cycle.

Each recertification cycle should confirm:

1. The agent is still actively needed for a documented purpose

2. Its permission scope is still the minimum required

3. The owner account is still appropriate and actively monitored

4. No new siblings or delegation chains have appeared since the last review

5. All regulatory documentation is current
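A recertification cycle of this shape can be tracked with a due date and a five-item checklist. The sketch below is illustrative; the check names are hypothetical shorthand for the five confirmations above, and the dates are invented.

```python
from datetime import date, timedelta

# Hypothetical shorthand for the five confirmations listed above.
CHECKS = (
    "purpose_documented",
    "scope_minimal",
    "owner_appropriate",
    "no_new_chains",
    "documentation_current",
)


def next_recertification(last_review: date, cycle_days: int = 90) -> date:
    """Date on which the next review falls due."""
    return last_review + timedelta(days=cycle_days)


def failed_checks(results: dict[str, bool]) -> list[str]:
    """Checks that were not confirmed; a missing entry counts as a failure."""
    return [check for check in CHECKS if not results.get(check, False)]


due = next_recertification(date(2025, 1, 1))
failures = failed_checks({
    "purpose_documented": True,
    "scope_minimal": False,
    "owner_appropriate": True,
    "no_new_chains": True,
})
```

Treating a missing answer as a failure keeps an incomplete review from passing silently.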

Continuous governance: from quarterly assessment to real-time signal

The assessment and remediation cycle above is necessary. It is not sufficient.

AI agents are deployed continuously. Permissions drift. Owners change. New agents appear in your environment every time a business analyst publishes a Copilot Studio workflow. A governance model built around quarterly reviews will always be operating on stale data.

Continuous AI agent governance requires four capabilities working together:

- Standing governance agents: Automated processes that run continuously against your identity graph, detecting new agents within hours of deployment, flagging permission scope changes, alerting on owner account changes, and triggering recertification when dormancy thresholds are crossed.

- Pre-enriched alert context: When a security alert fires on an AI agent, the blast radius score, ownership chain, sibling map, and permission history should be immediately available. Not assembled manually over 30 to 60 minutes. Available in the first conversation.

- Compounding identity graph: Every assessment, remediation, and recertification adds to the graph. Over time you build a historical record of every permission change, ownership transfer, dormancy event, and remediation action. That record is your regulatory evidence base.

- Compliance as a continuous signal: EU AI Act Article 72 requires post-market monitoring. That is a continuous obligation, not a point-in-time audit. Organizations that build continuous monitoring into their AI governance model are not just more secure. They are structurally more compliant than organizations running quarterly reviews.
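The detection side of a standing governance process can be sketched as a diff between two inventory snapshots. This is a minimal illustration, not a description of any product's implementation; the snapshot structure, event names, and agent names are hypothetical.

```python
def diff_snapshots(previous: dict[str, dict], current: dict[str, dict]) -> list[tuple]:
    """Emit (event, agent, detail) tuples for new agents, added scopes, and owner changes."""
    events = []
    for name in sorted(current):
        agent = current[name]
        old = previous.get(name)
        if old is None:
            events.append(("new_agent", name, sorted(agent["scopes"])))
            continue
        added = agent["scopes"] - old["scopes"]
        if added:
            events.append(("scope_added", name, sorted(added)))
        if agent["owner"] != old["owner"]:
            events.append(("owner_changed", name, agent["owner"]))
    return events


# Hypothetical snapshots taken a few hours apart.
previous = {"agent-a": {"owner": "admin-1", "scopes": {"Sites.Read.All"}}}
current = {
    "agent-a": {"owner": "admin-2", "scopes": {"Sites.Read.All", "Mail.Send"}},
    "agent-b": {"owner": "analyst-7", "scopes": {"Chat.Read.All"}},
}
events = diff_snapshots(previous, current)
```

Storing each emitted event, rather than only the latest snapshot, is what turns the diff into the historical record the compounding identity graph describes.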

The shift is from quarterly assessments to continuous governance, because AI agents are deployed continuously and your attack surface doesn't wait for your review cycle. That requires automating what cannot be done manually at the speed AI agents are being deployed. For a broader view of how this connects to identity security posture management, see Oleria's ISPM overview.

Framework mapping reference

The tables below map AI agent identity governance controls to both NIST CSF 2.0 and the NIST AI Risk Management Framework. Use these as a printable reference card for GRC reviews, board presentations, and regulatory audit preparation. This is the identity-governance equivalent of what usage-governance tools provide for the prompt and output layer.

NIST CSF 2.0: AI agent identity governance mapping

NIST AI RMF: AI agent identity governance mapping

Conclusion: identity is the foundation of AI governance

Usage governance tells you what employees are doing with AI. Identity governance tells you what AI agents can do to your enterprise. Both are necessary. Only one maps directly to EU AI Act requirements, NIST AI RMF maturity scoring, and the regulatory questions that will define the security conversation in 2026.

The gap between where most organizations are today and where they need to be is real. It is recoverable, but not through quarterly audits and manual registration forms. Most evidence required for regulatory compliance is already present in the identity graph. The question is whether you are surfacing it, governing it, and using it to get ahead of the problem.

Organizations that close this gap will not rely on manual processes and quarterly audits. Instead, they will treat AI agent identity as a first-class governance object: discoverable via the identity graph, assessable against regulatory frameworks, remediable through a prioritized playbook, and continuously monitored. That is what adaptive identity governance looks like for the AI era.