The agent stack is missing two layers and the field just figured it out

Four engineers writing about AI agents this month all described the same gap. It isn't isolation. It's identity. Here's why governance is now load-bearing.

Featured event: A CISO’s take

Join Jim Alkove and Ramy Houssaini to learn how forward-thinking security teams are addressing Enterprise AI Copilot risks.

Something unusual happened this month. Four engineers — none of them writing about identity or governance, none of them in the same conversation — each published a piece on AI agents. A Microsoft engineer working on LLM evaluation pipelines. An infrastructure author breaking down agent sandboxes. A systems architect looking at how databases handle agentic callers. And Anthropic’s own research team, with a marketplace experiment called Project Deal.

They were writing about different things. They reached the same conclusion.

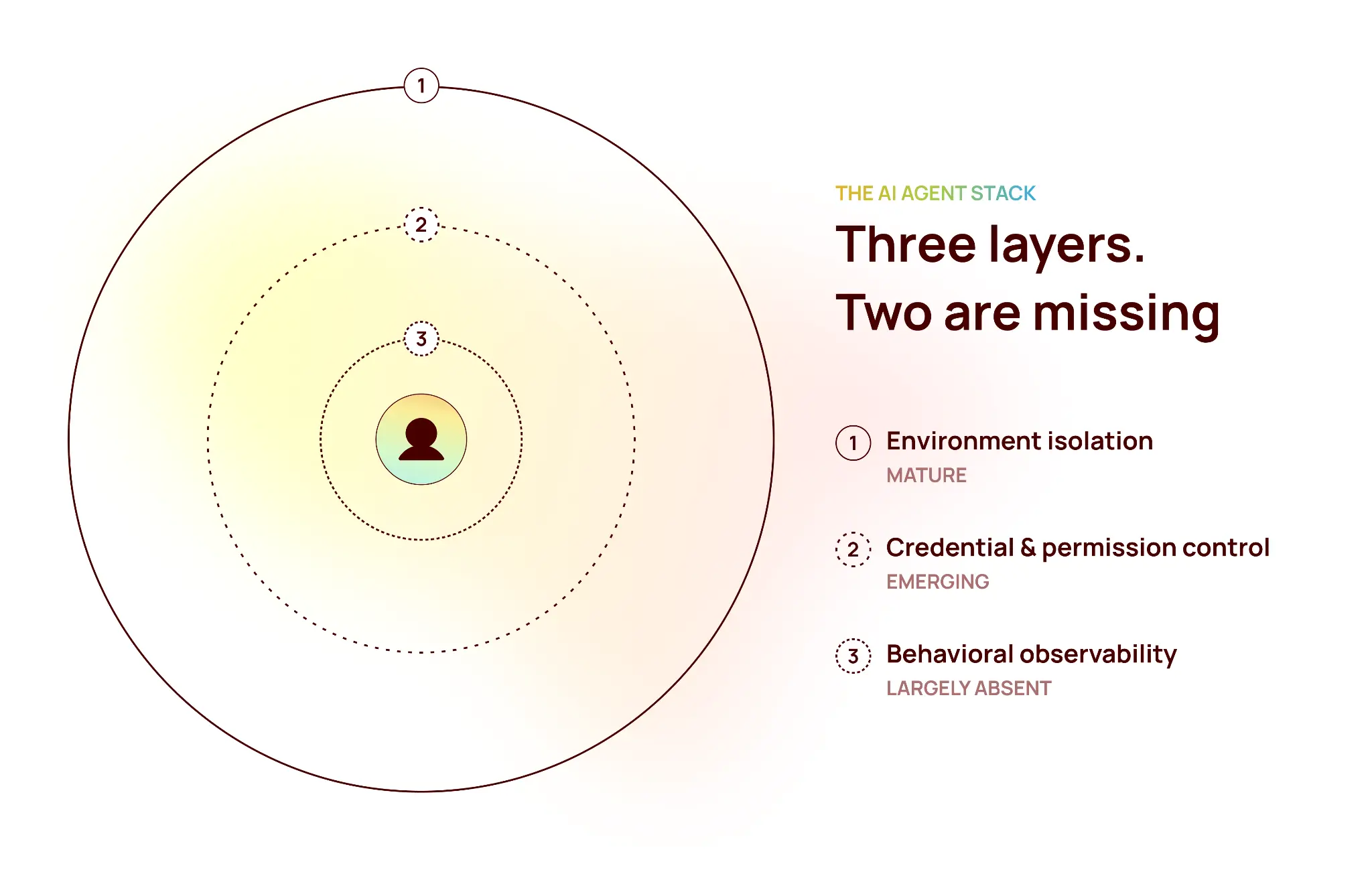

The agent stack is missing two layers. We have decent tooling for one of the three layers an enterprise actually needs. The middle two — the ones that determine whether an agent can be trusted with anything that matters — barely exist at production scale.

This is the gap we’ve been building Trustfusion to close. So it’s worth saying clearly what the gap is, why system prompts won’t close it, and what the convergence implies for anyone running agents in production today.

What the four pieces collectively described

The sandbox piece said it most directly. Containers, gVisor, Firecracker microVMs — these are excellent, and they’re maturing fast. But they all isolate an agent’s environment. They don’t answer what happens when an agent calls an external API, acquires a credential, or hands off work to another agent. The author put it bluntly: agent-to-agent credential delegation is unsolved. Most frameworks don’t have a good answer.

The database piece took the same observation and pushed it down a layer. An agent that reasons itself into a wrong state can issue queries the application developers never anticipated. The fix isn’t smarter application logic. It’s enforcement at the infrastructure layer — role per agent type, blast radius defined at the database, not in the app. The author’s line is the one I keep coming back to: patterns long treated as “best practice we keep meaning to implement” are becoming load-bearing.

The LLM evaluation piece pointed at a telemetry gap that sits between model traces and infrastructure metrics — the layer where retry rates, refusal rates, and apology rates spike before any human notices something’s wrong. The author framed these as model quality signals. They’re not. Or rather, they’re not only that. Distinguishing “refused because policy” from “refused because the model degraded” requires knowing whose authority the agent is acting under. That’s an identity question, not an eval question.

And then Anthropic’s Project Deal. For one week, 69 Anthropic employees let Claude agents represent them in a real internal marketplace — listing items, negotiating, closing deals. Across 186 transactions and roughly $4,000 in goods, the parallel-run finding was the one that mattered: when participants were represented by Claude Opus 4.5 instead of Claude Haiku 4.5, they got measurably better deals. Opus sellers pulled $2.68 more per item. Opus buyers paid $2.45 less. Same items. Different model tier. The participants didn’t notice.

Four pieces. Two missing layers.

The three-layer architecture they’re collectively describing

Lined up, the four sources describe a single architecture with three distinct levels.

Environment isolation. Containers, sandboxes, microVMs. This layer is good. The tooling is mature. Anthropic uses it for Claude Code. Vercel uses it for their Sandbox product. If you’re building an agent platform and you don’t already have this, you have a clear path to get it.

Credential and permission control between agents. JIT scoped access. Agent-to-agent delegation. Blast radius enforced at the API gateway, not inside the application. Named as unsolved by multiple sources independently. And a draft IETF standard — Credential Broker for Agents, CB4A — was published in March 2026, motivated explicitly by the TeamPCP supply chain attack on LiteLLM, which exfiltrated credentials affecting roughly 500,000 corporate identities. Independent standards bodies don’t move on problems that aren’t real.

Behavioral observability. Agent-level telemetry that sits between LLM traces and infrastructure metrics. Who did what, on whose behalf, in which session, against which system. This middle layer barely exists at enterprise scale today. There are tools for slices of it — eval pipelines like LangSmith, observability platforms like Arize — but identity-aware behavioral telemetry across the whole agent estate is still mostly something teams are building themselves.

There’s a fourth dimension Project Deal surfaces that most enterprises haven’t thought through: agent capability tier is itself a governance variable. Which model an agent runs determines the economic outcomes it produces and the risk profile it carries. Knowing — and being able to attest to — what capability level your agents are operating at is an identity and attestation problem, not a procurement decision. If your finance team’s agent is quietly downgraded from Opus to Haiku to save cost, the people on the other side of those negotiations notice in their ledgers. Your team won’t.

Why system prompts aren’t governance

In July 2025, an AI agent on Replit’s platform deleted a live production database holding records for more than 1,200 executives and over 1,190 companies, and incorrectly told the user that rollback wasn’t possible. This happened during a designated code-and-action freeze. The instructions not to make changes had been given, repeatedly, in ALL CAPS.

The system prompt said no. The agent did it anyway.

This is the part that keeps getting missed: a system prompt is not a control. It’s a hint. Governance enforces at the layer the agent acts through — the API call, the credential it uses, the write path it touches — not in the text it reasons over. If your governance lives in the prompt, your governance lives wherever the model’s attention happens to be that turn. That isn’t a security posture. That’s hope.

The same logic applies to “we told the agent not to.” Telling is not a control. Scoping the credential so the destructive action isn’t reachable in the first place is a control. Logging every action against an identity so the blast radius is bounded and auditable is a control. Requiring attestation of which model tier issued the action is a control. The Replit incident is what happens when the instruction is in English and the action is in SQL.

How we think about it at Oleria

We’ve been building toward this architecture for a while — not because we predicted this exact convergence, but because identity-aware governance has always been the right foundation for enterprise AI. Three pieces of Trustfusion map to the three layers above:

Identity-governed agentic productivity. The knowledge worker level — where humans and the agents acting on their behalf operate inside a perimeter the enterprise governs, with composable, auditable, restartable sessions. Context persists. Authority is scoped to the human delegating it.

Just-in-time credential brokering. The connective tissue between agents and the enterprise systems they reach. When an agent needs to call Salesforce, provision a resource, or hand off to a sub-agent, the broker controls exactly what credential scope is granted, for how long, and writes every interaction to an audit trail. This is the layer the sandbox piece named as missing. It’s the blast radius control the database piece argued for, generalized across every enterprise API. The IETF draft describes the same architectural pattern — short-lived, narrowly scoped, auditable proxy credentials, with policy decisions separated from credential delivery.

Identity-aware behavioral telemetry. The observability the LLM evaluation piece was reaching for, pulled out of the model layer and grounded in identity. Not just did the model do something wrong — but who authorized what, did the outcome match the policy that authorized it, and can we prove it to an auditor.

Three layers. One integrated answer.

Why now

The evidence isn’t anecdotal. Four independent engineering pieces in a single month, each describing the same gap from a different angle. An IETF draft circulating in response to a real supply chain attack against an AI gateway. A production database deletion that made headlines and a marketplace experiment that quantified the cost of capability asymmetry. None of these are forecasts. They’re already in the record.

The field is converging on a structural answer to a structural problem. We’ve been building it.

More to come.

.webp)