If you want AI to move faster, fix identity first

AI agents can't move faster than your ability to provision access. Learn why fragmented identity is the real AI deployment bottleneck — and what a unified solution looks like.

Around the world today, CEOs are asking, “Why aren’t we deploying and integrating AI faster?” One of the most common answers is security: it’s too risky to go faster, right?

While security is certainly part of the challenge, the details might surprise you. The real velocity limiter is the set of human bottlenecks sitting in the path of AI agents that could otherwise operate at machine speed.

To maximize the productivity benefits of AI agents, we need to give them access to large swaths of an organization’s IT systems and data while maintaining security, privacy, integrity, and availability. Because AI agents don’t fit into existing identity systems and processes, organizations are relying on humans to manually give AI agents the access they need. That manual access provisioning is a key reason that AI deployment feels absurdly slow. It’s also how AI agents are ending up with far more access than they should (and getting into trouble with that over-provisioned access).

Put simply, no organization will realize the transformative promise of AI until it can match the speed and autonomy of AI agents with equally fast, autonomous access to systems and data. What do we need to enable that match? A way to assign and manage AI agent identities autonomously, securely, and at machine speed.

The hidden AI bottleneck (and AI risk): manual access provisioning

AI agents are ready to deliver the greatest step-function change in productivity since the Industrial Revolution. But for an AI agent to succeed in any task you might give it, it first needs access to the data and the systems required to accomplish that task.

Today, even in the most sophisticated organizations, that access is being provisioned manually — by humans. We have this incredible technology capable of exponentially increasing productivity, and it’s waiting on humans to literally find and click the right buttons to get it started.

This is clearly absurd from an efficiency standpoint, not least because most organizations still struggle to provision access efficiently even for human workers: new employees wait days (even weeks) for IT to work through long queues and give them the access they need to start doing their jobs.

But it’s equally ludicrous from a security standpoint. Just as with human workers, manual provisioning errs on the side of giving more access (IT doesn’t want an angry worker having to come back to request additional permissions). Over-provisioning has long been a big problem for human users, and it’s now a big problem with non-human identities, too.

The risk of over-provisioning is fundamentally different with AI agents. Unlike service accounts, agents aren’t attached to static pieces of software. AI agents are autonomous, goal-seeking entities. They use any access they have to help them achieve their goal, and sometimes even go so far as to recruit their human partners to help them bypass access controls that get in their way. They have no sense of what they should or should not do; if they have access, they use it. They search through access permissions looking for paths forward, like the dinosaurs in Jurassic Park testing the fences until they find a way through. Unlike the dinosaurs (or human users), they do it at machine speed, so the damage is done long before you realize an agent has gone off the rails. I now regularly talk to CISOs who’ve experienced incidents because of this.

This isn’t an access problem. It’s an identity and access problem.

On the surface, this looks like an access problem: AI agents need access to systems and data, so we focus on how to provision that access faster, how to control it more tightly, and how to reduce the risk of over-permissioning.

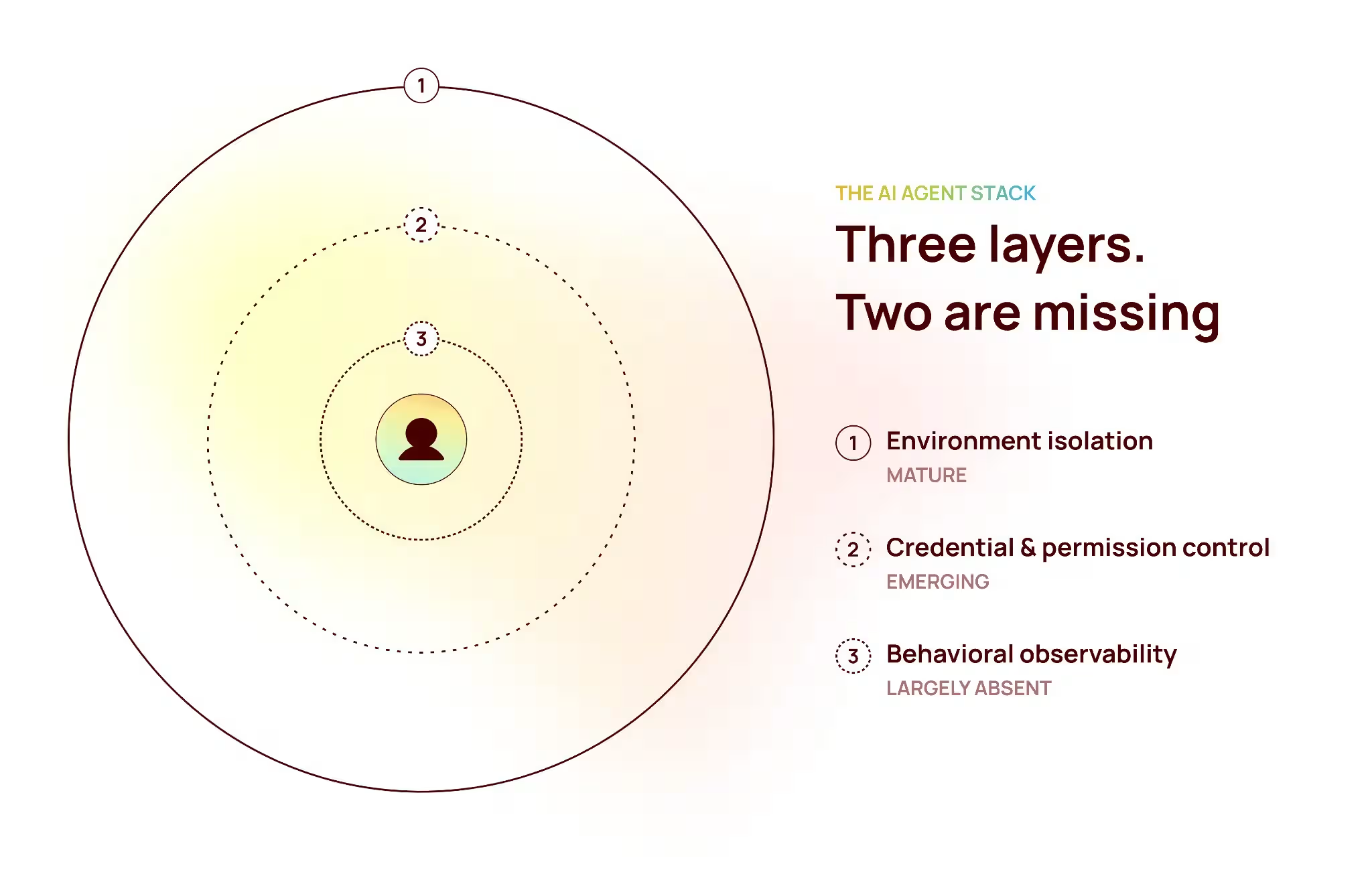

But the fundamental issue is that AI agents don’t have a defined, end-to-end identity and access story in the governance sense. Today's AI agent identities consist of a loose federation of scattered artifacts including service principals, OAuth grants, API keys, and tokens issued by different platforms, with no unified representation of what they are or how they should be governed or used.

Without that foundation, every access decision is a one-off judgment call: You either slow things down to be careful, or you over-provision to avoid friction. Most organizations are doing both right now, which is why AI deployment feels stalled and risky at the same time.

But with that solid identity foundation, access becomes something you can automate, manage, and trust. That’s how you unlock both speed and control.

You can’t move fast with fragmented identity.

Even if you accept that identity is the foundation, there’s a second challenge: in most organizations, identity is fragmented. Human identities live in one system. Non-human identities are spread across vaults, cloud platforms, and application directories. Agents need access to multiple systems, each with its own way of issuing and managing credentials. And no single system has a complete view of an agent's identity, access, and usage.

The problem with this fractured approach is that governance depends on having the full context. For example, with human identities, we look at the job a person was hired to do and assemble the set of applications and corresponding permissions that enable their day-to-day work. Today, this analysis is manual. Even where access provisioning has been automated with an identity governance tool, the approvals remain manual at nearly every organization.

With AI agents, we lack the business purpose typically associated with an employee’s job code, so manually determining and approving appropriate access can’t possibly keep pace with the speed and scale of AI agents.

To properly manage AI agent identity for both productivity and security, organizations can’t just bolt on another point solution that’s focused exclusively on AI identity. They need a unified identity layer that brings together identity for every system into a single, consistent model — a system of record for all identity, much like modern CRM systems are the system of record for all customer data.

What a system built for AI speed looks like.

Once identity is unified, the shape of a real solution becomes clearer. Instead of a patchwork of disconnected controls, you have a system that can consistently, instantly answer the same set of questions for every agent in the environment.

At a practical level, that system needs to deliver five things:

- Clear identity and ownership: Every agent must have a single identity strongly linked to the agent’s code and tied to an accountable human or team. These parallel what we do for human workers today and provide governance with 1) an anchor to manage all of the agent's access and 2) a responsible party when something goes wrong.

- An explicit lifecycle: Agents don’t have natural joiner-mover-leaver events like humans do, so the lifecycle has to be explicitly defined. You need to track when agents are created, how they change over time, and when they should be retired. Access that outlives purpose is one of the most common (and preventable) sources of risk.

- Visibility: It’s not enough to know what applications an agent can access. You need to understand everything an agent can do inside those systems — and see what it has done over its lifetime. That’s what turns blast radius into something you can measure and reduce.

- Governance grounded in context: Access reviews, policies, and certifications only work if they reflect reality. That means evaluating agents as identities with purpose, ownership, and behavior — not just as credentials with permissions attached.

- Fast, informed incident response: When an agent is involved in an incident, teams need immediate clarity on who owns the agent, what the agent could have accessed, what actions it actually took, and the business impact of those actions. With that context, containment becomes precise and actionable, and investigations finish much faster.

These capabilities complement each other, fitting closely together to form a stronger AI governance system. It’s like a Jenga tower: Take one out, and your tower is immediately less stable. Take two out, and that tower gets really wobbly. Too many organizations are working with an incomplete AI governance solution that’s only a sneeze away from toppling.
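To make the five capabilities above a little more concrete, here is a minimal Python sketch of what a unified record for a single agent might hold. Everything in it is an assumption for illustration: the `AgentIdentity` class, its field names, and the sample values are hypothetical and do not describe any particular product’s data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentIdentity:
    """Hypothetical unified record for one AI agent (all names are illustrative)."""
    agent_id: str
    code_fingerprint: str   # identity linked to the agent's code
    owner: str              # accountable human or team
    purpose: str            # business context for governance decisions
    created_at: datetime    # lifecycle: when the agent was created
    retire_at: datetime     # lifecycle: when the agent should be retired
    permissions: set[str] = field(default_factory=set)  # what it can do
    actions: list[str] = field(default_factory=list)    # what it has done

    def is_past_retirement(self, now: datetime) -> bool:
        """True once the agent has reached its explicit retirement date."""
        return now >= self.retire_at

    def unused_permissions(self) -> set[str]:
        """Permissions granted but never exercised: candidates for removal."""
        return self.permissions - set(self.actions)

    def incident_context(self, now: datetime) -> dict:
        """The questions responders ask first, answered from one record."""
        return {
            "owner": self.owner,
            "could_access": sorted(self.permissions),
            "actually_did": list(self.actions),
            "past_retirement": self.is_past_retirement(now),
        }


# Sample data, invented for this example.
agent = AgentIdentity(
    agent_id="invoice-bot",
    code_fingerprint="sha256:placeholder",
    owner="finance-platform-team",
    purpose="reconcile vendor invoices",
    created_at=datetime(2026, 1, 5, tzinfo=timezone.utc),
    retire_at=datetime(2026, 7, 1, tzinfo=timezone.utc),
    permissions={"ledger:read", "ledger:write", "crm:read"},
    actions=["ledger:read", "ledger:read"],
)
context = agent.incident_context(now=datetime(2026, 3, 1, tzinfo=timezone.utc))
print(context)
```

In this sketch, `unused_permissions()` surfaces the gap between what the agent can do and what it has actually done, which is one simple way to turn blast radius into something measurable.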

Why Oleria built a fundamentally different identity solution.

The speed and scale of AI agents completely outpace any organization’s ability to adapt an existing patchwork identity governance framework to accommodate agent identities. But at the highest level, the visibility and context gaps aren’t new. In fact, they’re the same gaps that most organizations still struggle with as they try to govern human and non-human identities independent of AI. These familiar gaps just become more glaring — and more consequential — as agents scale and operate at machine speed.

That’s why Oleria’s approach is unique: we didn’t set out to build a new AI identity solution to bolt onto existing, fragmented identity stacks. We spent the last three years building a platform to solve the identity problem universally, period. Our platform eliminates fragmentation and creates a single system of record through a single identity graph that brings together human, non-human, and AI agent identities, along with their actual access and activity data.

That unified foundation naturally encompasses AI agent identity, and effective governance follows: Agents can be discovered because all identities appear in a single system of record. Ownership can be established and enforced because identities are attributable. Lifecycle can be managed because the stages are explicit. Access can be evaluated in its global context because all access relationships are connected. And incident response accelerates because responders have instant access to the context and can understand and prioritize based on what’s at stake.
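As a generic illustration of why connecting access relationships matters, the sketch below models identities, credentials, and resources as nodes in a small graph and walks it to find everything an identity can ultimately reach. This is not Oleria’s implementation. The node naming scheme, the `ACCESS_EDGES` data, and the `reachable_resources` function are all assumptions made for the example.

```python
from collections import deque

# Hypothetical access edges: identity -> credential -> resource.
ACCESS_EDGES = {
    "agent:invoice-bot": ["service-principal:finance-app", "api-key:crm"],
    "service-principal:finance-app": ["resource:ledger-db"],
    "api-key:crm": ["resource:crm-contacts"],
    "user:alice": ["resource:crm-contacts"],
}


def reachable_resources(graph, identity):
    """Breadth-first walk from an identity to every resource it can reach."""
    seen = {identity}
    queue = deque([identity])
    resources = set()
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor in seen:
                continue
            seen.add(neighbor)
            if neighbor.startswith("resource:"):
                resources.add(neighbor)
            queue.append(neighbor)
    return sorted(resources)


print(reachable_resources(ACCESS_EDGES, "agent:invoice-bot"))
```

Because the agent’s service principal and API key live in the same graph as its identity, one traversal answers a question that would otherwise require checking each credential store separately.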

That’s the difference between trying to govern AI on its own and making it a seamless part of the same identity system that your existing human and non-human identities are built on.

Identity will determine how fast you can move with AI.

No matter the organization, no matter the industry, one of the basic jobs of every CEO is to figure out how to get quality outcomes, faster, at a lower cost. AI is a tool that promises to give you all three. But the reality is AI agents can’t move faster than the organization’s ability to grant access to systems and data. And today, we’re still provisioning access like it’s 2005 — tickets, approvals, manual configuration.

From what I heard at RSA 2026, security leaders are starting to recognize that identity is the missing key to confidently accelerating AI deployment. The conversation is moving away from surface-level questions about AI usage and toward something much more fundamental: How do we give an AI agent an identity — and govern that identity at the same speed it operates?

With that foundation, the future of AI agent deployment looks very different: You define what the agent is supposed to do. You define your risk tolerance — how tightly or loosely that agent should be constrained. And then the system can handle the rest. Access is provisioned dynamically, scoped to the task, adjusted continuously, and revoked automatically. The agent gets exactly what it needs to accomplish the goal — no more, no less — and it all happens at machine speed.
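The flow described above (define the task, define the risk tolerance, let the system scope and expire access) can be sketched in a few lines of Python. This is a toy model under stated assumptions: the task names, scope strings, tolerance labels, and time limits in `TASK_SCOPES` and `TTL_BY_TOLERANCE` are invented for illustration, and a real system would derive them from its identity layer rather than from hard-coded tables.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical policy tables, hard-coded only for the example.
TASK_SCOPES = {
    "summarize_tickets": frozenset({"tickets:read"}),
    "close_stale_tickets": frozenset({"tickets:read", "tickets:write"}),
}
TTL_BY_TOLERANCE = {
    "tight": timedelta(minutes=5),   # constrain the agent closely
    "loose": timedelta(hours=1),     # give the agent more room
}


@dataclass(frozen=True)
class Grant:
    agent_id: str
    scopes: frozenset
    expires_at: datetime


def provision(agent_id, task, tolerance, now):
    """Issue a grant scoped to the task, with a lifetime set by risk tolerance."""
    return Grant(agent_id, TASK_SCOPES[task], now + TTL_BY_TOLERANCE[tolerance])


def is_allowed(grant, scope, now):
    """Access holds only for in-scope actions and only until the grant expires."""
    return scope in grant.scopes and now < grant.expires_at


start = datetime(2026, 1, 1, 9, 0, tzinfo=timezone.utc)
grant = provision("ticket-bot", "summarize_tickets", "tight", start)
print(is_allowed(grant, "tickets:read", start + timedelta(minutes=1)))
```

Expiry is checked on every call here, so revocation needs no separate cleanup step: once the time limit passes, the grant simply stops authorizing anything.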

It’s no different from the human side of businesses today. You don’t tell a senior leader how to execute on an objective. You define the objective, allocate resources, and expect outcomes. Agents should work the same way.

And the only way that model works is if identity is doing the heavy lifting. Identity is what makes access dynamic, governance continuous, and execution trustworthy — at enterprise scale and machine speed. It’s what allows organizations to accelerate AI without losing control.

Which makes this moment pretty clear: As organizations rethink AI identity and access, they can’t afford to just add another layer onto their fragmented identity stacks. They need to finally establish a trusted system of record for identity that’s ready to support everything that comes next. Because this transition will happen fast — and those that get AI identity right now will move faster than everyone else.

See how Oleria gives you a unified identity foundation to deploy and govern AI agents with speed and confidence.